As the technology landscape evolves, artificial intelligence (generative or not) is increasingly being integrated into user experience (UX). AI can appear as an explicit feature, or it can blend seamlessly into a product's core functionality. However, developing an effective “AI-native” UX requires a delicate balance between the capabilities of AI models (large language models, predictive AI, etc.) and user expectations.

This article delves into the nuances of “AI-native” UX and explores:

- The spectrum of human-computer interaction

- Key considerations when integrating AI features into digital products

- Emerging opportunities to improve user experience

The power of suggestion and feedback

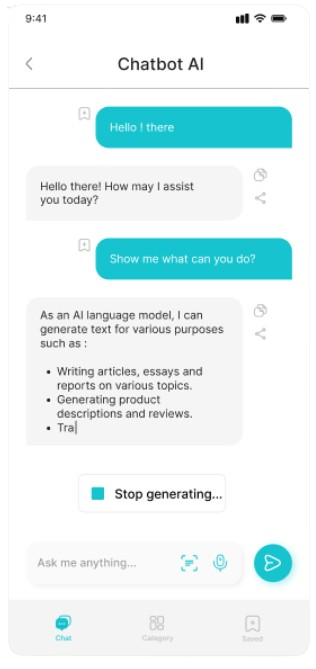

Conversational interfaces, such as chatbots and virtual assistants, add a new dimension to UX: users can converse with the AI in a completely personalized way.

However, this flexibility brings its own challenges. Users may find it difficult to understand what the AI can and cannot do. Without clear guidance, they may experience the digital equivalent of blank-page anxiety and be unsure how to interact effectively.

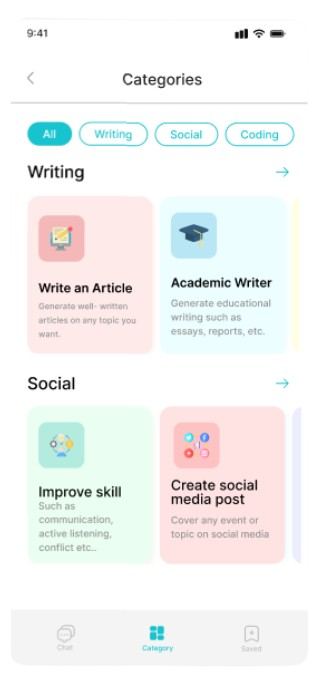

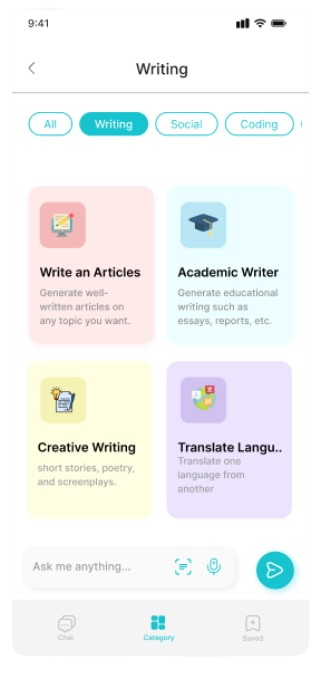

Suggested prompts are a useful answer to this problem. They spare users from having to discover on their own what data they can chat with. The idea is to surface suggestions contextually, at the moment when the user might be looking for inspiration for the next question. This makes the interaction noticeably more accessible and fluid.
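The following minimal TypeScript sketch makes the idea of contextual suggestions concrete. It assumes a hypothetical `ChatContext` object that records what the user currently has open and how far the conversation has progressed. The `suggestPrompts` function and the prompt wordings are illustrative only and are not taken from any particular product.

```typescript
// Hypothetical snapshot of what the user is doing right now.
interface ChatContext {
  activeDataset?: string;   // dataset currently open in the app, if any
  lastUserMessage?: string; // most recent user message, if any
  messageCount: number;     // number of messages exchanged so far
}

// Returns up to `max` prompt suggestions tailored to the current context.
function suggestPrompts(ctx: ChatContext, max = 3): string[] {
  const suggestions: string[] = [];

  if (ctx.messageCount === 0) {
    // Empty conversation: offer a starter to counter "blank page" anxiety.
    suggestions.push("What can you help me with?");
  }

  if (ctx.activeDataset) {
    // Point the user at the data they can actually chat with.
    suggestions.push(`Summarize the key trends in ${ctx.activeDataset}`);
    suggestions.push(`Which columns in ${ctx.activeDataset} have missing values?`);
  }

  if (ctx.lastUserMessage) {
    // Mid-conversation: suggest a natural follow-up.
    suggestions.push("Explain that answer in simpler terms");
  }

  return suggestions.slice(0, max);
}
```

In a real interface, the context would come from the application state, and the suggestions would be rendered as clickable items near the input field.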

Feedback is also essential. It gives users practical examples that guide them and helps prevent errors. Consider using real-time feedback, similar to the input assistants used when creating complex passwords: some sites give real-time guidance as you type to help you meet the required criteria. This approach applies a fundamental UX principle, error prevention, which is one of Nielsen's heuristics for evaluating user experience.
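The password-style approach can be carried over to a prompt field. The TypeScript sketch below checks a draft prompt against a list of rules and returns a hint for each rule the draft does not meet. The `PromptRule` type, the `checkPrompt` function and the three rules are hypothetical examples. A real product would base its rules on the known weaknesses of its own model.

```typescript
// One rule checked against the draft prompt as the user types.
interface PromptRule {
  id: string;
  hint: string;                         // guidance shown while the rule is unmet
  isMet: (prompt: string) => boolean;
}

// Hypothetical rules, for illustration only.
const rules: PromptRule[] = [
  {
    id: "not-empty",
    hint: "Describe what you want the assistant to do.",
    isMet: (p) => p.trim().length > 0,
  },
  {
    id: "specific-enough",
    hint: "Add a bit more detail (at least 5 words).",
    isMet: (p) => p.trim().split(/\s+/).filter(Boolean).length >= 5,
  },
  {
    id: "single-request",
    hint: "Try asking one question at a time.",
    isMet: (p) => (p.match(/\?/g) ?? []).length <= 1,
  },
];

// Returns the hints for every rule the current draft does not satisfy.
function checkPrompt(draft: string): string[] {
  return rules.filter((rule) => !rule.isMet(draft)).map((rule) => rule.hint);
}
```

To get a live checklist like the one on password forms, you could call `checkPrompt` from the input field's change handler and display the returned hints next to the field.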

Error prevention also calls for contextual education, which means integrating advice and tips fluidly and intuitively, for example through tooltips or discreet micro-animations. Amelie Bouchez recommends this strategy as part of developing an optimized user experience for AI-based systems.

UX minimizes the weaknesses of AI

Despite its impressive capabilities, the consumer application ChatGPT has not really shaken up the business world, mainly because its results are hard to verify. Conversely, GitHub Copilot has become a standard tool for developers because its results are easy to check and modify. It embodies the principle of verifiability.

Rather than aiming for flawless perfection, it is wiser to reduce the cost of errors by designing a system in which users can easily spot and correct them.

Putting verifiability first makes this possible even before any work on improving the AI model's performance. A well-designed user experience can therefore go beyond the raw capabilities of your AI model!

Integrating AI into digital products requires designers to strike a delicate balance by matching the advanced capabilities of AI models with the specific needs and context of each user. Although AI can offer exceptional performance in natural language interaction, data analysis and image recognition, it also has limits. These include difficulty understanding ambiguous language and correctly interpreting very specific semantic contexts.

To navigate these waters effectively, UX designers must create user interfaces that:

- exploit the strengths of AI

- minimize the impact of its weaknesses.

That means developing experiences that intuitively guide users toward the most reliable and effective AI features, while giving them the tools they need to avoid or correct potential errors.

For example, if an AI system is particularly good at recommending content based on user preferences, the interface could highlight those recommendations and let users easily adjust the selection criteria when the results are unsatisfactory. Likewise, if the AI struggles to interpret complex queries, the interface could encourage users to phrase their requests more simply or offer examples of effective queries.

AI as co-pilot

The interaction between a user and an LLM covers a wide range of possibilities, which requires a deep understanding of what users expect and need. Different users have different expectations of an AI-based system. Those who are directly involved in specific tasks or problems may want some level of control over how the AI behaves.

For example, they might want to personalize how and when AI intervenes in their work. In contrast, users less engaged in using the application might prefer an interaction where the AI acts more autonomously, making decisions and carrying out tasks without constant human intervention.

Between these two extremes, there is often an intermediate approach in which the AI acts as a co-pilot and actively collaborates with users to improve results.

Adaptive UX

Additionally, it is important to recognize that users' relationship with AI is not static. As they become more familiar with the capabilities and limitations of AI, their expectations and needs may change. This underlines the need for UX designers to create interfaces and user journeys that adapt to this evolution.

For example, when starting to use an AI system, a user may need:

- step-by-step guides

- more visible assistance to become familiar with the system.

As the user gains confidence and understanding, the interface can evolve to offer more autonomy and less direct guidance. This lets the user take full advantage of the AI's capabilities.
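One way to implement this progression is to derive a guidance level from usage signals. The TypeScript sketch below assumes hypothetical `UsageStats` counters and arbitrary thresholds: fewer than three sessions, a success rate of at least 80% and at least ten sessions. These values are placeholders and should be tuned with real usage data.

```typescript
type GuidanceLevel = "step-by-step" | "tips" | "minimal";

// Hypothetical usage signals collected by the product.
interface UsageStats {
  sessions: number;        // sessions in which the user used the AI feature
  successfulTasks: number; // tasks completed without asking for help
  helpRequests: number;    // times the user asked for help
}

// Chooses how much guidance to show. Thresholds are illustrative assumptions.
function guidanceLevel(stats: UsageStats): GuidanceLevel {
  // New users always get step-by-step guides.
  if (stats.sessions < 3) return "step-by-step";

  const attempts = stats.successfulTasks + stats.helpRequests;
  const successRate = attempts === 0 ? 0 : stats.successfulTasks / attempts;

  // Experienced, mostly autonomous users get minimal guidance.
  if (successRate >= 0.8 && stats.sessions >= 10) return "minimal";

  // Everyone in between gets occasional tips.
  return "tips";
}
```

The interface would then show step-by-step guides, occasional tips or almost no guidance, depending on the returned level.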

Designing for this evolution amounts to creating a protean, modular interface that changes over the course of the user's interactions. In this context, UX design work focuses mainly on architecture, usage flows and flexible templates. Instead of a single interface, there are thousands of possible combinations, which let each user's experience adapt to the way they interact with the product. In this model, the designer cannot always predict exactly which interface will be generated. The result is a highly customizable and dynamic user experience, but it requires close monitoring of usage and continued attention to user feedback.

Examples of integrating AI into digital products

Approach 1: direct input

Description of the approach

The first approach focuses on an interface where users give instructions directly to AI models. In this framework, users write instructions (sequential, iterative or otherwise) to generate text, images, video and other content. Notable examples include OpenAI's ChatGPT interface and Midjourney's use of Discord as an input channel.

Points of attention

Because there are no explicit interface elements (buttons, calls to action, etc.), these systems must respond flexibly to a wide variety of user instructions in order to minimize frustration and maximize usefulness. It is crucial to frame the conversation properly and to understand the limitations and capabilities of these AI models. This helps ensure a smooth user experience and good results.

Approach 2: Implicit integration of AI

Description of the approach

In contrast to the direct input paradigm, the second approach does not ask users to control the AI model's outputs through explicit interface elements. Instead, instructions are sent to the AI in the background as users interact with specific elements of the application's interface. This allows a fluid, discreet integration, similar to recommendation algorithms on an e-commerce site.
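Here is a minimal TypeScript sketch of this pattern. Interface events are translated into instructions that the application sends to the model on the user's behalf. The `UiEvent` type, the event names and the prompt wording are hypothetical, and the actual model call is left out.

```typescript
// Hypothetical interface events emitted by an e-commerce app.
type UiEvent =
  | { kind: "viewedProduct"; productName: string }
  | { kind: "addedToCart"; productName: string; cartSize: number };

// Builds the instruction sent to the model in the background.
// The user never sees or types this prompt.
function backgroundInstruction(event: UiEvent): string {
  switch (event.kind) {
    case "viewedProduct":
      return `Suggest three products similar to "${event.productName}".`;
    case "addedToCart":
      return `The cart now has ${event.cartSize} items, including "${event.productName}". Suggest one complementary product.`;
  }
}
```

Because the user never writes these instructions, the application owns their wording. This is why the results need the careful orchestration described below.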

Points of attention

Despite its subtlety, this approach requires meticulous orchestration, because the results proposed by the AI must match user expectations and the application's objectives. Balancing transparency and functionality, designers must create intuitive interfaces that exploit the capabilities of AI without compromising user autonomy or understanding.

Approach 3: AI-assisted application-specific user interface

Description of the approach

The third approach combines user interface elements with AI assistance. Users build instructions through a mix of direct prompts to the model and user interface controls. Microsoft's Copilot is an example: it seamlessly integrates AI capabilities into the GitHub, Office and Windows ecosystems.
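In this hybrid model, the free-text instruction and the state of ordinary UI controls end up in the same request. The TypeScript sketch below assumes a hypothetical text-rewriting feature with a tone dropdown, a word-limit slider and a checkbox. `buildHybridPrompt` and its output format are illustrative assumptions. They do not describe how Copilot or any other product actually builds its prompts.

```typescript
// Values coming from ordinary UI controls (dropdown, slider, checkbox).
interface RewriteControls {
  tone: "formal" | "neutral" | "casual";
  maxWords: number;
  keepBulletPoints: boolean;
}

// Merges the user's free-text instruction with the control values
// into a single prompt for the model. Hypothetical format.
function buildHybridPrompt(
  instruction: string,
  controls: RewriteControls,
  selectedText: string,
): string {
  const constraints = [
    `Use a ${controls.tone} tone.`,
    `Keep the result under ${controls.maxWords} words.`,
  ];
  if (controls.keepBulletPoints) {
    constraints.push("Preserve any bullet points.");
  }
  return [
    `Instruction: ${instruction.trim()}`,
    `Constraints: ${constraints.join(" ")}`,
    `Text:\n${selectedText}`,
  ].join("\n");
}
```

Keeping the controls separate from the free text lets the interface validate them (for example, by bounding `maxWords`) before anything is sent to the model.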

Points of attention

This hybrid approach requires close coordination between AI models and application-specific UI elements to deliver a cohesive user experience. Designers must navigate the complexities of the AI's assistive layers to ensure a seamless integration that amplifies functionality, efficiency and user satisfaction.

Conclusion

As technology evolves, integrating artificial intelligence into UX is becoming more and more common. However, creating an effective “AI-native” UX requires a careful balance between the capabilities of AI models and user expectations.

Suggestions and feedback play an essential role in guiding users through conversational interfaces. Incorporating them in real time can greatly improve the experience, reduce errors and make interaction more fluid.

UX also plays a crucial role in mitigating AI's weaknesses. Rather than aiming for perfection, we must design systems in which errors are easy to identify and correct, so that a well-designed user experience can go beyond the raw capabilities of the AI. By carefully balancing AI advances with user needs, UX designers can create interfaces that leverage AI's strengths while minimizing its limitations. This paves the way for innovative and adaptive user experiences.

Guillaume Lanthier, Practice Manager AI & Data at Smile